Niels Doorn, Ph.D. student

My research focuses on improving teaching and learning strategies for software testing in computer science education.

Blog

- Writing in Valencia

- Presenting article at ENASE 2025 in Porto

- Couple of days working on Schiermonnikoog

- Visiting the CSEE&T conference and presenting our article

- Serious Gaming Summer School Tallinn

- Poster at NWO ICT.OPEN 2024

- Presenting at INTED 2024

- Testing day 2023

- European Summer School on Science Communication

- Presentation at OURsi event

- EASE Conference and LEARNER workshop

- The Romanian Testing Conference

- Lightning talk at VERSEN SEN 23

- Presentation at RCIS 2023

- Presentation at Vakdidactiek Informatica event

- Poster at NWO ICT.OPEN 2023

- Presentation at ICST 2023

- Presentation at NIOC 2023

- Poster and presentation at ITiCSE 2022

- Working in Linz

- Summer holiday

- Switching off Twitter

- Working from a boat

Orcid

My Orcid ID is: 0000-0002-0680-4443.

Mastodon

I sometimes toot about my research on my Mastodon account @niels76@mastodon.online, but more often about other things that interest me or fill me with wonder.

GitHub

Some of my projects can be found on GitHub.com/nielsdoorn. Feel free to contribute.

Running / sports

Follow me on Strava! I like to go for a run now and then. It helps me to think and also to clear my mind. I often get my best ideas while working out, but I also tend to forget most of them immediately.

Other interests

☕ 🧘 🌳 🐱 🐔 🥞 🚲 📷

TILE Repository

Came here for the Test Informed Learning with Examples repository? Look no further! It can be found at tile-repository.github.io/.

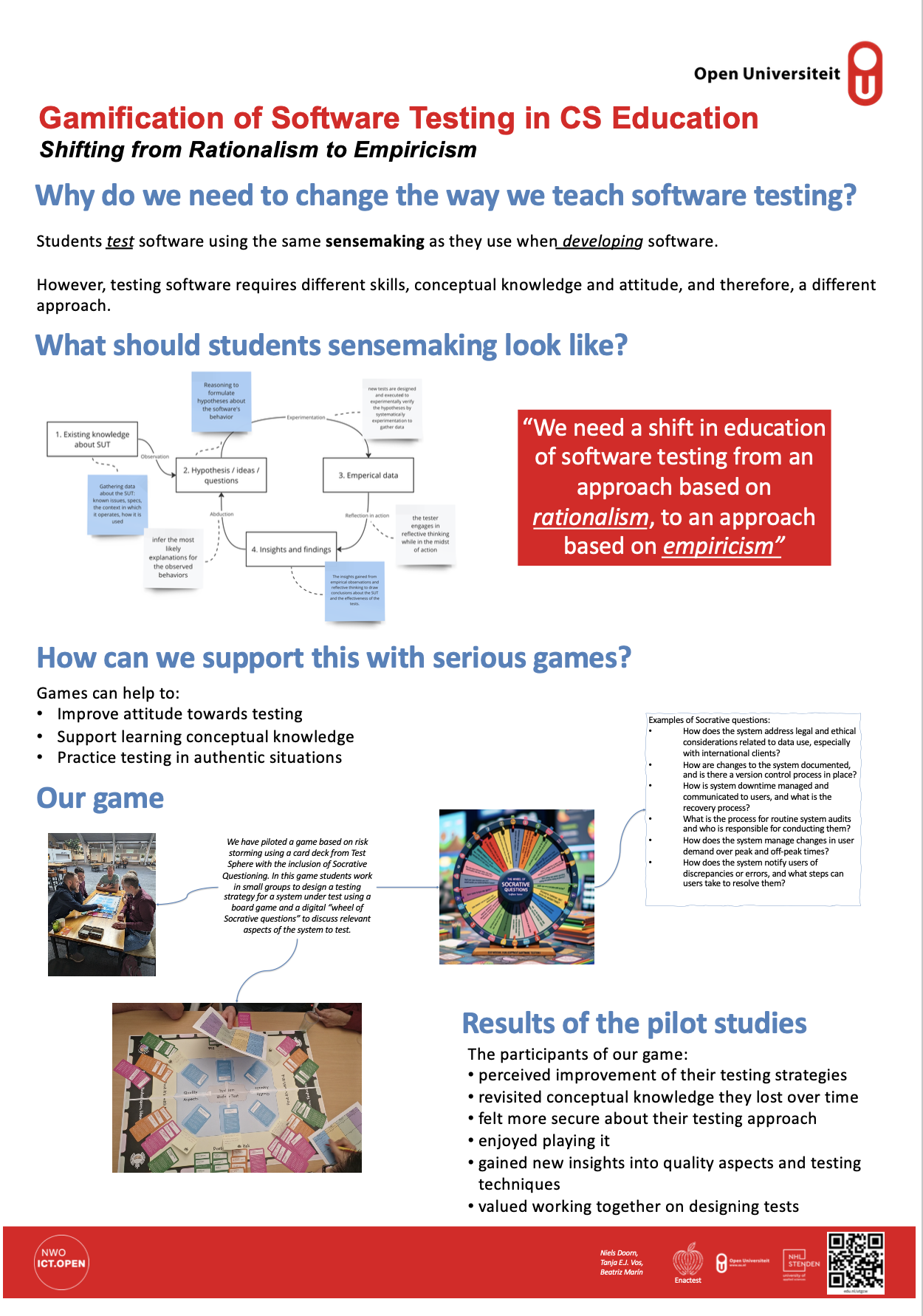

Poster at NWO ICT.OPEN 2024

by Niels Doorn

Our poster at NWO ICT.OPEN 2024 won third prize in the poster awards!

The abstract for this poster reads:

This poster highlights our research into using a serious game to help computer science students learning software testing shift from an approach rooted in rationalism to one rooted more in empiricism. In industry, software testing is widely recognized as the default way to assess software quality. Many studies have demonstrated the need to improve testing education from the beginning of Computer Science related degrees. However, for various reasons, Computer Science educators struggle to include software testing effectively in their curricula.

Working towards filling the gap in novel techniques for testing education, our prior research revealed that students often adopt a so-called ‘developer approach’ when creating tests for software systems, relying primarily on the conceptual knowledge gained in their programming courses. These students see testing as a problem-solving task rooted in rational thinking. We advocate that software testing should be done not only from a rationalist perspective but also from an empiricist one. Testing should resemble small scientific experiments, in which students use heuristics and exploration to form hypotheses about how the system should work, then experiment to test these hypotheses and analyze the system’s feedback.

To support educators in reaching these learning outcomes, we began using gamification and developing a serious game. We use Socratic questioning to elicit students' critical thinking skills, helping them identify risks concerning the system under test. Anecdotal results from pilot studies suggest that incorporating such interactive learning methods into computer science programs could change how students understand and experience software testing.